How AI Voice Receptionists Work (And Why They Sound Human)

"Did I Just Speak to a Real Person?"

I get this from customers fairly regularly. A garage owner will tell me his customer rang up, had a proper conversation, booked an MOT — and then later asked him: "Was that person new? I haven't spoken to her before."

There was no person. It was the AI.

That reaction used to surprise me. It doesn't any more. The technology has moved very fast in the last two years, and "sounds like a robot" is genuinely no longer an accurate description of what we're building. So let me explain how it actually works — without the technical jargon.

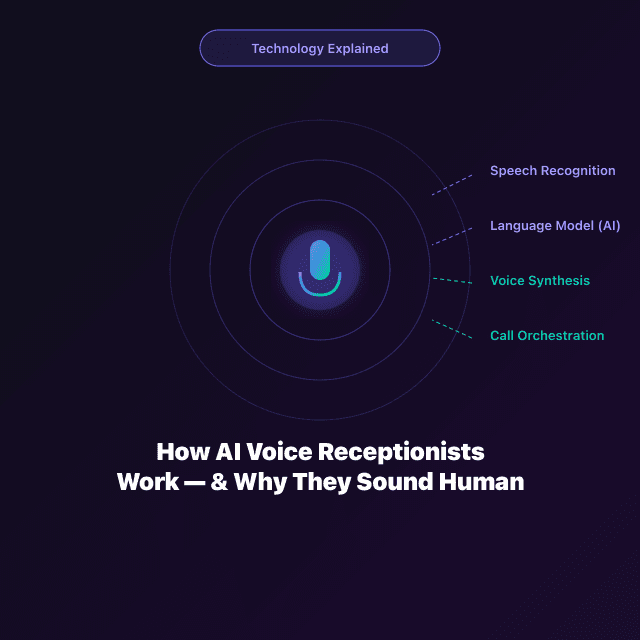

The Four Layers

Layer 1: Speech Recognition — Hearing the Caller

When someone speaks, the audio is transcribed into text in real time. FlowEdge AI uses Deepgram's Nova-2 model with its British English option, which copes with regional accents, trade vocabulary, and the slightly degraded audio quality you get on a mobile call.

This is why it understands "MOT" and "boiler service" and "can you fit me in on Thursday" — it's not doing a generic English transcript. It's been trained on language your actual customers use.

That transcription happens in under 200 milliseconds. Fast enough to feel like a real conversation.

Layer 2: Language Understanding — the Brain

The transcribed text goes to a large language model. We use Anthropic's Claude Sonnet — one of the best conversational AI models available right now.

But here's the key thing: it's not just a general AI talking to your customers. It's been given a detailed brief specifically about your business. Your services, your prices, your opening hours, how you handle bookings, your personality. So when someone asks "how much is a full service?", the AI answers with your actual price — not a vague "it depends." And then it naturally follows up with "would you like to book one in?"

That's what makes it feel like a real conversation rather than a phone menu.
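For the curious, here is a rough idea of what "giving the AI a brief" can look like in practice. This is a minimal sketch, not our production setup: the `BusinessBrief` class, the `build_system_prompt` function, the garage name and the prices are all made up for illustration. The point is simply that your details end up as plain-text instructions the language model reads before every call.

```python
from dataclasses import dataclass, field


@dataclass
class BusinessBrief:
    """Hypothetical container for the details the assistant is briefed on."""
    name: str
    opening_hours: str
    tone: str
    prices: dict[str, str] = field(default_factory=dict)


def build_system_prompt(brief: BusinessBrief) -> str:
    """Turn the brief into plain-text instructions for the language model."""
    price_lines = "\n".join(
        f"- {service}: {price}" for service, price in brief.prices.items()
    )
    return (
        f"You are the phone receptionist for {brief.name}.\n"
        f"Opening hours: {brief.opening_hours}.\n"
        f"Tone: {brief.tone}.\n"
        "Prices (quote these exactly, never guess):\n"
        f"{price_lines}\n"
        "After answering a price question, offer to book an appointment."
    )


# Made-up business and prices, purely for illustration.
brief = BusinessBrief(
    name="Example Garage",
    opening_hours="Mon-Fri 8am-6pm",
    tone="friendly and brief",
    prices={"Full service": "£189", "MOT": "£45"},
)
prompt = build_system_prompt(brief)
print(prompt)
```

Because the prices sit in the brief rather than in the model's general knowledge, changing a price means editing one line of configuration.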

Layer 3: Voice Synthesis — Speaking Back

The AI's text response gets converted into speech using ElevenLabs — genuinely the best voice synthesis technology available at the moment. FlowEdge AI has a selection of British voices, male and female, with different characteristics.

These are not the robotic monotones from old text-to-speech. They have natural rhythm, appropriate pacing, tonal variation. A trained voice actor listening closely can usually spot AI. A garage customer calling to book an MOT? Almost never.

Layer 4: Call Orchestration — Managing the Whole Thing

All of this is coordinated by Vapi.ai, which handles the real-time audio stream, manages turn-taking (knowing when the caller has finished speaking), and keeps latency low so the conversation flows naturally even on variable phone connections.
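If you like to see things laid out, the four layers can be sketched as one short function that passes a caller's turn from stage to stage. This is an illustration under stated assumptions, not the actual Vapi.ai, Deepgram, Claude or ElevenLabs integration: `handle_turn` and the three `fake_` functions are hypothetical stand-ins so the example runs on its own, and the "audio" here is just text encoded as bytes.

```python
from typing import Callable

# Each stage is a plain function so the sketch runs without any vendor SDK.
Transcribe = Callable[[bytes], str]
Reply = Callable[[str, list[str]], str]
Synthesize = Callable[[str], bytes]


def handle_turn(
    audio: bytes,
    history: list[str],
    transcribe: Transcribe,
    reply: Reply,
    synthesize: Synthesize,
) -> bytes:
    """Run one caller turn through layers 1 to 3.

    Whatever calls this function for each turn plays the role of layer 4.
    """
    text = transcribe(audio)            # Layer 1: speech to text
    history.append(f"caller: {text}")
    answer = reply(text, history)       # Layer 2: decide what to say
    history.append(f"assistant: {answer}")
    return synthesize(answer)           # Layer 3: text back to audio


# Hypothetical stand-ins; real stages would call the external services.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_reply(text: str, history: list[str]) -> str:
    if "mot" in text.lower():
        return "We can do an MOT on Thursday. Would you like to book?"
    return "Could you tell me a bit more about what you need?"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


history: list[str] = []
audio_out = handle_turn(
    b"Can I book an MOT?", history, fake_transcribe, fake_reply, fake_synthesize
)
print(audio_out.decode("utf-8"))
```

The running `history` list is also where a full transcript of the call would come from in a design like this.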

What It Can and Can't Do

It can:

- Answer questions about your services, pricing, availability, and location

- Book appointments into your live calendar

- Take detailed messages for callbacks

- Handle multiple simultaneous calls with no queue

- Work at 3am on a bank holiday with the same quality as 10am on a Tuesday

- Send SMS confirmations after every call

- Give you full transcripts of every conversation

It can't (yet):

- Handle deeply emotional or distressed conversations with the same warmth a human would

- Navigate genuinely novel, unpredictable situations outside its configured knowledge

- Replace a senior person who knows your business and your long-standing customers personally

The AI handles 80–90% of the calls a typical UK service business receives from start to finish. For the rest, your team gets a detailed transcript so they can follow up properly.

"Will There Be an Awkward Pause?"

This is the question I get asked most. No — not with modern infrastructure. Our typical response latency (the time between the caller stopping speaking and the AI starting its reply) is 600–900 milliseconds. That's within the range of a normal conversational pause. Callers don't notice it.
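To show where that time goes, here is a back-of-the-envelope latency budget. Only the 200 millisecond transcription ceiling and the 600–900 millisecond total come from this article; the other per-stage figures are assumptions invented for the example, and the names `budget_ms`, `total_latency` and `fits_budget` are hypothetical.

```python
# Illustrative per-stage timings in milliseconds.
budget_ms = {
    "speech_recognition": 200,          # ceiling quoted in this article
    "language_model": 350,              # assumed for illustration
    "voice_synthesis": 150,             # assumed for illustration
    "network_and_turn_detection": 100,  # assumed for illustration
}


def total_latency(stages: dict[str, int]) -> int:
    """Add up the stage timings, assuming they run one after another."""
    return sum(stages.values())


def fits_budget(stages: dict[str, int], ceiling_ms: int = 900) -> bool:
    """Check the total against the upper end of the quoted range."""
    return total_latency(stages) <= ceiling_ms


total = total_latency(budget_ms)
print(f"Total: {total} ms, within budget: {fits_budget(budget_ms)}")
```

Running the stages strictly one after another is the pessimistic case. Systems like this typically overlap them, for example by starting speech synthesis while the language model is still generating, which is how the total can stay inside a normal conversational pause.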

What Happens to the Data?

The audio isn't stored permanently. What's stored is the transcript: a text record of the conversation, encrypted, in your dashboard, and accessible only to you. It's handled in accordance with UK GDPR. No audio files sitting around, no customer conversations floating in the cloud indefinitely.

The Short Version

An AI voice receptionist in 2026 is not the robotic phone menu from 2010, and it's not science fiction. It's a set of mature, production-ready technologies — the world's best speech recognition, the world's best language models, the world's best voice synthesis — working together to answer your phone and book your appointments.

The technology works. The question is just whether you're using it.